AI infrastructure in data centres: cooling and power supply

by J. Groh - 2026-05-04

AI infrastructure is growing rapidly, yet it remains invisible to many users. Whilst applications are becoming increasingly prevalent in everyday life, the actual processing power is generated in data centres.

There, thousands of chips operate in parallel, powered and embedded in complex cooling systems. Without this foundation, scalable use would not be possible. Rittal addresses precisely this point and places the technical foundation at the centre. Instead of looking at individual components, the infrastructure is understood as a complete system. Power supply, server racks and cooling are interlinked – and must function reliably under increasing demands. Developments show that the bottleneck no longer lies in computing power alone, but in its technical integration.

Rittal infrastructure for AI data centres as a complete system

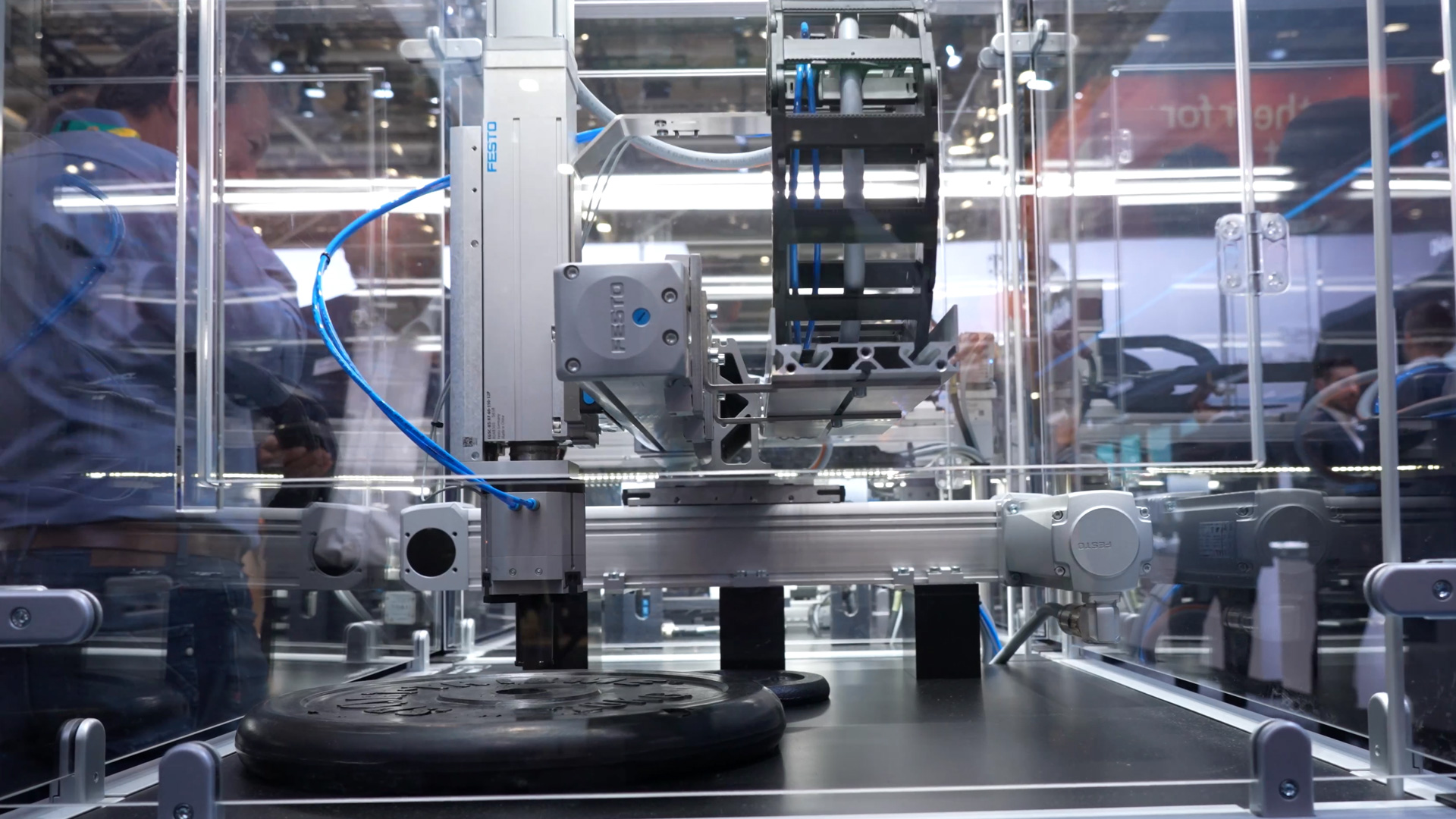

Rittal deliberately does not position its solution as a standalone product. The focus is on an integrated approach that covers all relevant areas of an AI data centre. These include power supply, rack structures and the dissipation of the heat generated. The challenge is clearly defined: AI chips require enormous amounts of energy. This energy is almost entirely converted into heat. Consequently, the technical complexity shifts from pure computing power to the infrastructure that enables this performance in the first place. The concept shown relies on modularity and scalability. Systems can be adapted to different performance requirements and scale up as demand increases. This flexibility becomes a decisive factor, particularly for AI applications where demand evolves dynamically.

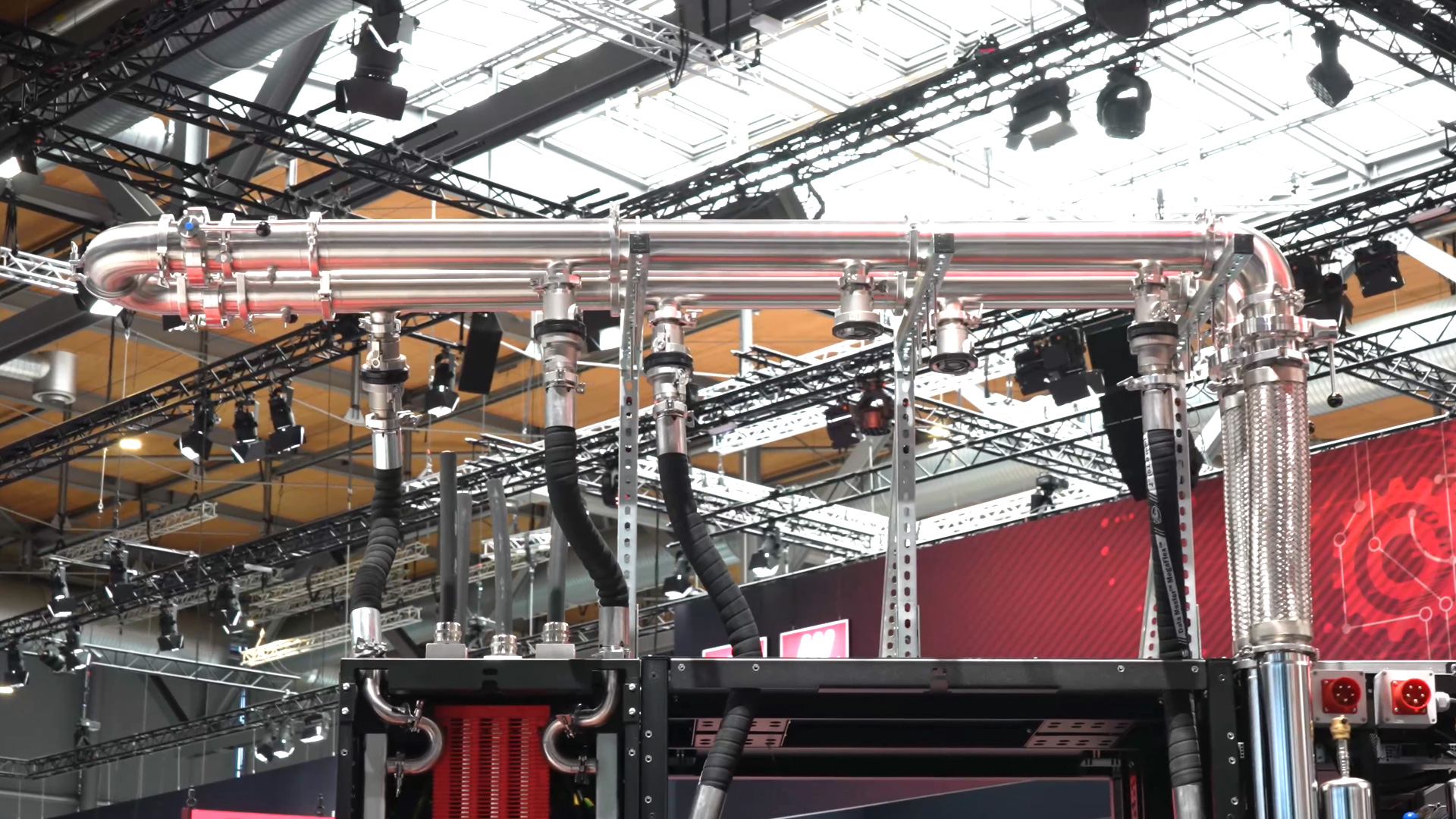

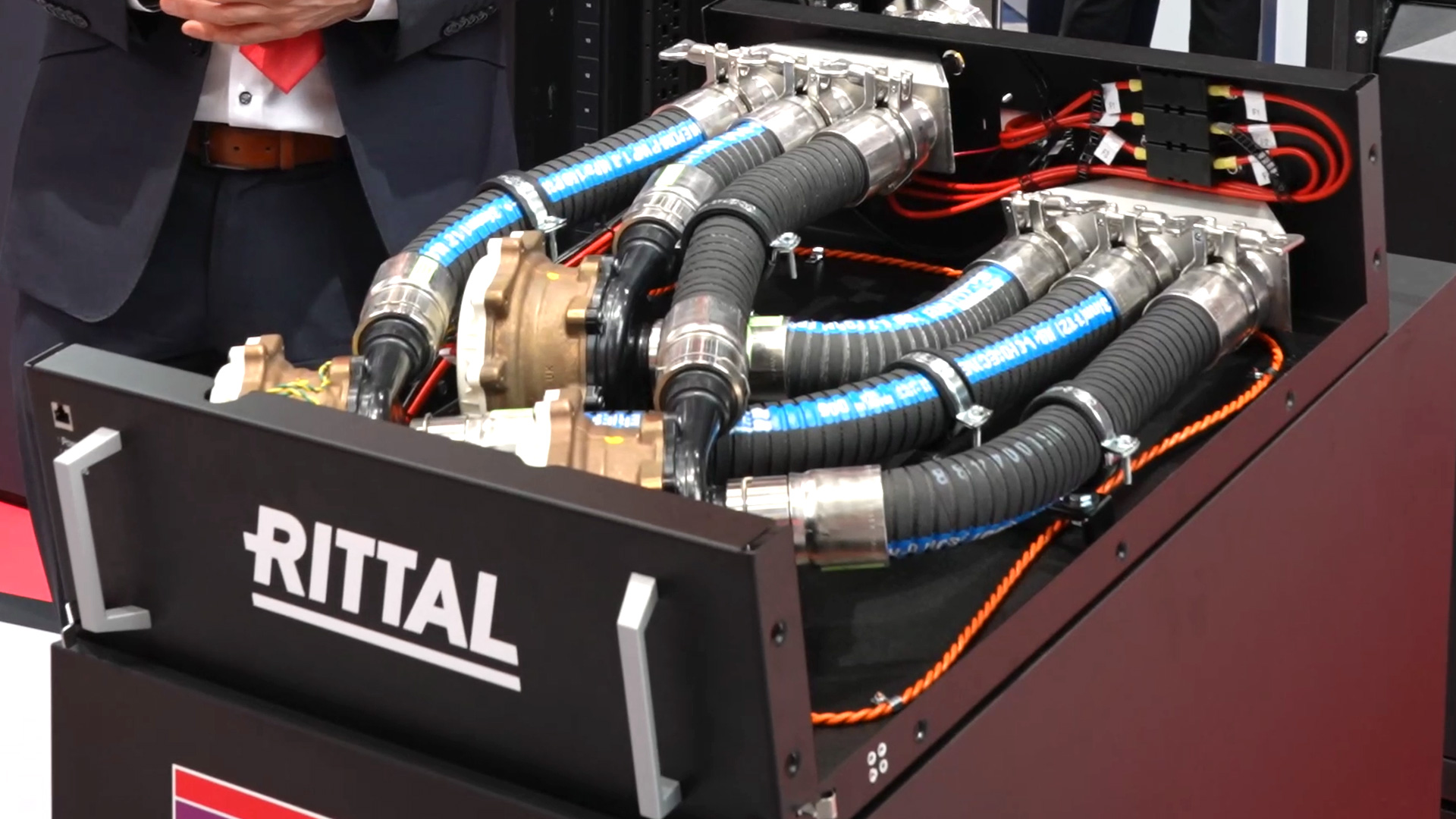

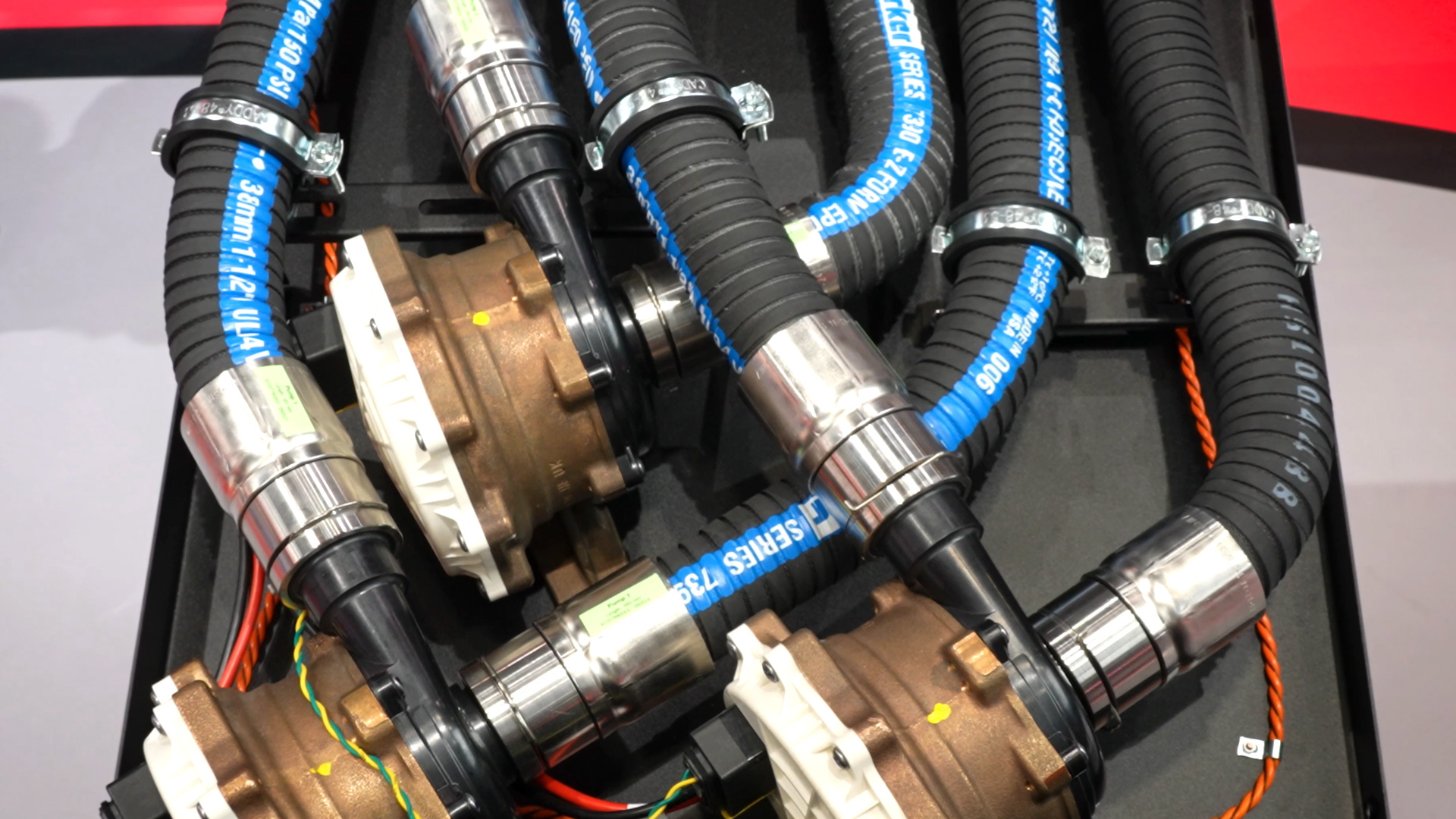

Water-based cooling for high performance in data centres

Cooling represents the central bottleneck in modern data centres. Air-based systems reach their limits at high power densities. Rittal is therefore demonstrating a consistent shift towards water-based cooling. The scale is considerable: around 1,500 litres of water per minute are required for approximately one megawatt of cooling capacity. These figures illustrate just how much the requirements have changed. Cooling is no longer merely a supporting function, but an integral part of the system architecture. A pump cassette with several pumps operating in parallel ensures the necessary flow rate. In the configuration shown, a total of 15 pumps work together. This redundant design is necessary to ensure stability even under continuous load. The decision to use water as the cooling medium is technically justified. Other methods no longer achieve the necessary efficiency at these power densities. At the same time, this increases the demands on design and safety, as the interaction between water and electronics must be designed with particular care.
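The flow figure can be sanity-checked with a simple heat balance, Q = ṁ · c · ΔT. The following is a minimal sketch rather than Rittal's own sizing method: it assumes textbook properties for water, and it assumes that the 15 pumps share the flow equally, which the article does not state. All function and constant names are illustrative.

```python
# Rough heat-balance check for water cooling: Q = m_dot * c_p * delta_T.
# Assumptions (not from the article): textbook water properties and
# equal flow sharing between pumps.

WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water
WATER_DENSITY_KG_PER_L = 1.0             # approximation, ignores temperature


def coolant_temperature_rise(heat_load_w: float, flow_l_per_min: float) -> float:
    """Return the water temperature rise in kelvin for a given heat load."""
    mass_flow_kg_per_s = flow_l_per_min * WATER_DENSITY_KG_PER_L / 60.0
    return heat_load_w / (mass_flow_kg_per_s * WATER_SPECIFIC_HEAT_J_PER_KG_K)


def flow_per_pump(total_flow_l_per_min: float, pumps_running: int) -> float:
    """Return the flow each pump must deliver if the load is shared equally."""
    if pumps_running <= 0:
        raise ValueError("at least one pump must be running")
    return total_flow_l_per_min / pumps_running


if __name__ == "__main__":
    # Figures from the article: about 1 MW at about 1,500 litres per minute.
    delta_t = coolant_temperature_rise(1_000_000, 1500)
    print(f"Temperature rise: {delta_t:.1f} K")
    print(f"Per pump, 15 running: {flow_per_pump(1500, 15):.1f} l/min")
    print(f"Per pump, 14 running: {flow_per_pump(1500, 14):.1f} l/min")
```

Under these assumptions, the water warms by a little under 10 K on its way through the system, and each remaining pump has to deliver only slightly more if one unit drops out.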

Power supply and increasing power in AI systems

Alongside cooling, the focus is shifting to power supply. The power density of modern systems is growing so rapidly that existing concepts are reaching their limits. Power levels in the region of one megawatt are already expected in individual enclosures. By way of comparison: this corresponds to the power demand of an entire block of flats. This fundamentally changes the requirements for the electrical infrastructure. Conventional AC solutions will no longer suffice in the long term. The trend is moving towards higher voltages and DC technology. Current systems already operate at 400 volts, whilst future developments are targeting 800 volts. This transition is necessary to reduce losses and ensure an efficient power supply. The combination of high power consumption and continuous heat generation makes it clear that energy and cooling cannot be considered in isolation. Both systems must be precisely coordinated with one another.
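Why a higher voltage reduces losses can be illustrated with two basic relations: the current is I = P / V, and the conduction loss in a conductor is I² · R. The sketch below assumes an idealised DC feed and a hypothetical conductor resistance of 1 mΩ chosen purely for illustration; neither value comes from the article, and the function names are invented for this example.

```python
# Idealised DC comparison of 400 V and 800 V distribution for the same load.
# Assumption (not from the article): a hypothetical 1 milliohm conductor path.


def line_current_a(power_w: float, voltage_v: float) -> float:
    """Return the DC current in amperes needed to deliver power_w at voltage_v."""
    return power_w / voltage_v


def conduction_loss_w(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Return the resistive loss I^2 * R in watts for the given load."""
    current_a = line_current_a(power_w, voltage_v)
    return current_a ** 2 * resistance_ohm


if __name__ == "__main__":
    load_w = 1_000_000            # about one megawatt, as in the article
    resistance_ohm = 0.001        # hypothetical value for illustration only
    for voltage_v in (400, 800):
        current_a = line_current_a(load_w, voltage_v)
        loss_w = conduction_loss_w(load_w, voltage_v, resistance_ohm)
        print(f"{voltage_v} V: {current_a:.0f} A, loss {loss_w:.1f} W")
```

For the same load and the same conductor, doubling the voltage halves the current and cuts the conduction loss to a quarter, regardless of the resistance value assumed.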

Computing racks and AI chips at the heart of the infrastructure

At the heart of the infrastructure are the computing racks in which the AI chips are installed. They form the core of the system, yet are entirely dependent on the surrounding structures. Without a stable power supply and reliable cooling, sustained performance cannot be guaranteed. Rittal highlights its role as a supplier of these rack systems. With an annual production of around 200,000 units, the industrial scale becomes clear. Demand is rising continuously, in parallel with the expansion of AI applications across a wide range of industries. The requirements placed on the racks themselves go beyond mere housing functions. They must accommodate high power densities, enable the integration of cooling components and, at the same time, ensure mechanical stability. This makes them central elements within the entire infrastructure.

Requirements for AI infrastructure: balancing technology and scalability

The development of AI infrastructure shows a clear shift in priorities. Whilst computing power was long considered the decisive factor, energy efficiency, cooling concepts and system integration are now coming to the fore. The key requirements can be summarised as follows:

- high power density combined with a stable power supply

- efficient cooling via water-based systems

- modular infrastructure for scalable data centres

- close coordination between rack, power and cooling

Development of AI infrastructure in an industrial context

With the increasing use of AI, pressure on the underlying infrastructure is also growing. Applications are becoming more complex, data volumes are rising, and data centres must be continuously expanded. At the same time, energy consumption and cooling requirements are increasing to an extent that demands new technical solutions. Rittal addresses this transformation with a systemic approach. Instead of isolated products, an integrated infrastructure is created whose parts are coordinated and can be flexibly expanded. This development shows that the success of AI applications will in future depend more on the quality of the infrastructure than on pure computing power. The real innovation lies not only in the algorithm, but also in the ability to operate it reliably under real-world conditions.